Introduction

The year 2024 will go down in history as the beginning of mainstream AI. As organizational leaders grapple with the flood of information about artificial intelligence (AI), they are also under tremendous pressure to drive innovation and gain a competitive edge.

Chief Data Officers (CDOs), Chief Information Officers (CIOs), Vice Presidents (VPs), and any other leaders who work with data in IT or business operations now face a pivotal challenge:

How to derive value from AI?

It has quickly become apparent that AI is only as good as the data feeding it. Good data in means high value out: valuable prediction engines, high-performing AI agents, bots, and so on. One can only imagine the impact of bad data, misaligned data, or any kind of skewed data that makes its way into AI engines.

Think of AI as the gas tank or charging port of your favorite car, and data as the fuel or charge that goes into it; imagine the impact of even a glass of water going into either one. Get the picture?

High-quality data is not just a technical term for clean data; it is a strategic asset that determines the success of AI initiatives.

This guide explores the critical role of data quality in AI, highlighting actionable strategies for data managers at all levels and roles within the data organization to align data governance practices with business objectives and leverage AI tools to enhance data quality.

The Role of Data Quality in AI Success

AI models are becoming a commodity; they are almost there already. Large organizations such as Google, Facebook, and OpenAI have dozens of AI models, some of which do the same things in different ways.

AI models are still evolving in accuracy and have a long way to go before becoming fully autonomous.

One thing will not change: AI models are only as good as the data they are trained on. Poor data quality, characterized by inaccuracies, inconsistencies, and incompleteness, can lead to:

- Skewed Insights: Biased or incorrect data distorts AI predictions, undermining trust in AI-driven decisions.

- Inefficient Processes: Models require significant retraining and adjustments when data issues are discovered too late.

- Missed Opportunities: Faulty data can result in missed patterns or trends that drive business innovation.

Data as the Foundation: Because AI models rely on accurate, complete, and high-quality data, poor data not only leads to inaccurate AI outputs but also erodes business value. Poor data quality can result in significant financial losses, including missed opportunities and reputational or brand damage.

Data leaders must recognize that addressing data quality upfront is crucial for maximizing AI’s potential.

Key Aspects of Data Quality for AI

1. The Impact of Poor Data on AI Outcomes

- Bias and Discrimination: Erroneous data introduces biases, leading to unethical or non-compliant AI decisions.

- Reduced Model Accuracy: Inaccurate data undermines the reliability of AI models, making them ineffective.

- Increased Costs: Rectifying data issues after model deployment requires significant time and resources.

2. Prioritizing Data Quality Initiatives

- Align with Business Objectives: Tie data quality goals to measurable business outcomes, such as improved customer satisfaction or operational efficiency.

- Establish Clear Metrics: Define success criteria for data quality, such as accuracy rates, timeliness, and completeness levels.

- Cross-Functional Collaboration: Involve stakeholders from IT, analytics, and business units to align data quality efforts across the organization.
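As a rough illustration of what "clear metrics" can look like in practice, the sketch below computes completeness, a rule-based validity rate (used here as a simple stand-in for accuracy, which normally requires a trusted reference to compare against), and timeliness over a handful of records. The records, field names, the `"@" in value` rule, and the 30-day freshness window are all hypothetical choices made for the example, not recommended standards.

```python
from datetime import datetime, timedelta

# Hypothetical sample records; field names are illustrative only.
records = [
    {"id": 1, "email": "ana@example.com", "updated": datetime(2024, 6, 1)},
    {"id": 2, "email": None, "updated": datetime(2024, 5, 30)},
    {"id": 3, "email": "not-an-email", "updated": datetime(2023, 1, 15)},
    {"id": 4, "email": "li@example.com", "updated": datetime(2024, 5, 20)},
]


def completeness(rows, field):
    """Share of rows where the field is populated (not None or empty)."""
    return sum(1 for r in rows if r.get(field) not in (None, "")) / len(rows)


def validity(rows, field, is_valid):
    """Share of populated values that pass a validation rule."""
    values = [r[field] for r in rows if r.get(field) not in (None, "")]
    if not values:
        return 0.0
    return sum(1 for v in values if is_valid(v)) / len(values)


def timeliness(rows, field, as_of, max_age):
    """Share of rows updated within max_age of the as_of date."""
    return sum(1 for r in rows if as_of - r[field] <= max_age) / len(rows)


as_of = datetime(2024, 6, 2)  # assumed reporting date
metrics = {
    "completeness": completeness(records, "email"),
    "validity": validity(records, "email", lambda v: "@" in v),
    "timeliness": timeliness(records, "updated", as_of, timedelta(days=30)),
}
print(metrics)
```

Scores like these only become useful once each one is tied to a target agreed with the business, for example a minimum completeness level for the fields a customer-facing model depends on.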

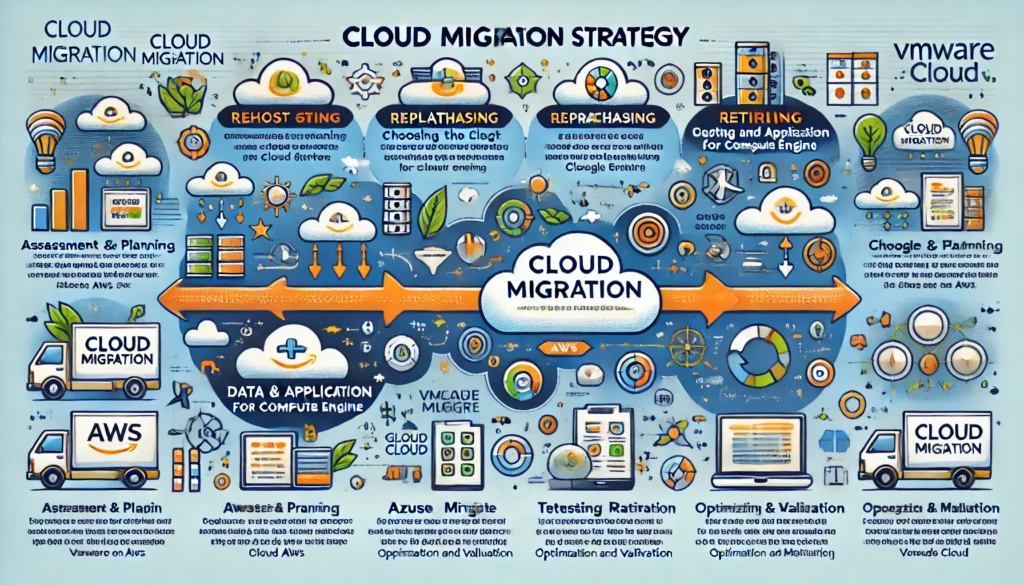

3. Leveraging AI to Enhance Data Quality

- AI-Powered Data Cleansing: Use machine learning algorithms to identify and correct errors in datasets, such as duplicates or missing values.

- Anomaly Detection: Employ AI tools to detect outliers and inconsistencies in real time.

- Data Enrichment: Enhance datasets with external or supplementary data sources using AI-driven matching and integration techniques.
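The cleansing and anomaly detection steps above can be sketched in a few lines. This example deliberately uses plain statistical stand-ins rather than a trained machine learning model: duplicates are found by normalizing a key, missing values are filled with the median, and outliers are flagged with a modified z-score based on the median absolute deviation (MAD), using the commonly cited constant 0.6745 and cutoff 3.5. The records, field names, and function names are hypothetical.

```python
from statistics import median

# Hypothetical order records; names and values are illustrative only.
rows = [
    {"customer": "Acme Corp", "amount": 120.0},
    {"customer": "acme corp ", "amount": 120.0},  # duplicate after normalization
    {"customer": "Globex", "amount": None},       # missing value
    {"customer": "Initech", "amount": 95.0},
    {"customer": "Umbrella", "amount": 110.0},
    {"customer": "Hooli", "amount": 9800.0},      # suspicious outlier
]


def deduplicate(records):
    """Drop rows whose normalized customer name and amount were already seen."""
    seen, unique = set(), []
    for r in records:
        key = (r["customer"].strip().lower(), r["amount"])
        if key not in seen:
            seen.add(key)
            unique.append(r)
    return unique


def impute_median(records, field):
    """Fill missing values with the median of the observed values."""
    fill = median(r[field] for r in records if r[field] is not None)
    return [{**r, field: fill if r[field] is None else r[field]} for r in records]


def flag_outliers(records, field, threshold=3.5):
    """Return rows whose MAD-based modified z-score exceeds the threshold."""
    values = [r[field] for r in records]
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against
    return [r for r in records if 0.6745 * abs(r[field] - med) / mad > threshold]


clean = impute_median(deduplicate(rows), "amount")
outliers = flag_outliers(clean, "amount")
print(len(clean), [r["customer"] for r in outliers])
```

A production pipeline would more likely route flagged rows to a data steward for review than correct or drop them automatically, since an outlier can be a genuine large order rather than an error.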

Building Robust Data Governance Practices

CDOs play a critical role in establishing a governance framework that supports data quality and AI success. Key components include:

- Data Ownership and Stewardship

  - Assign accountability for data assets across the organization.

  - Ensure data stewards actively monitor and maintain data quality.

- Policy Development

  - Develop policies for data creation, validation, and usage.

  - Enforce adherence to regulatory standards such as GDPR or CCPA.

- Continuous Monitoring and Feedback Loops

  - Implement tools for real-time data quality monitoring.

  - Use AI-driven analytics to continuously refine and improve data processes.
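One way to picture continuous monitoring is a quality gate that profiles each incoming batch and raises alerts when a metric crosses a policy threshold. The sketch below assumes two metrics (completeness and duplicate rate) and made-up threshold values; the names `THRESHOLDS`, `profile`, and `check` are hypothetical, and real thresholds would come from the governance policies described above.

```python
# Hypothetical thresholds; real values would be set by governance policy.
THRESHOLDS = {"completeness": 0.98, "duplicate_rate": 0.01}


def profile(batch, key_field, required_fields):
    """Compute simple quality metrics for one batch of records."""
    total = len(batch)
    complete = sum(
        1
        for r in batch
        if all(r.get(f) not in (None, "") for f in required_fields)
    )
    distinct_keys = len({r.get(key_field) for r in batch})
    return {
        "completeness": complete / total,
        "duplicate_rate": (total - distinct_keys) / total,
    }


def check(metrics, thresholds):
    """Return an alert message for every metric that breaches its threshold."""
    alerts = []
    if metrics["completeness"] < thresholds["completeness"]:
        alerts.append(
            f"completeness {metrics['completeness']:.2f} "
            f"is below {thresholds['completeness']:.2f}"
        )
    if metrics["duplicate_rate"] > thresholds["duplicate_rate"]:
        alerts.append(
            f"duplicate rate {metrics['duplicate_rate']:.2f} "
            f"is above {thresholds['duplicate_rate']:.2f}"
        )
    return alerts


# Hypothetical batch with one empty email and one repeated id.
batch = [
    {"id": 1, "email": "ana@example.com"},
    {"id": 2, "email": ""},
    {"id": 2, "email": "li@example.com"},
    {"id": 4, "email": "sam@example.com"},
]
batch_metrics = profile(batch, "id", ["id", "email"])
alerts = check(batch_metrics, THRESHOLDS)
for alert in alerts:
    print(alert)
```

The feedback loop comes from what happens next: alerts go to the accountable data steward, and recurring breaches become input for revising the upstream data creation and validation policies.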

Driving Informed Business Decisions with AI and Quality Data

With high-quality data, AI models can:

- Deliver Actionable Insights: Reliable data enables accurate predictions and decision-making.

- Enhance Customer Experiences: Personalization and targeted strategies become more effective.

- Optimize Operations: AI-powered tools drive efficiency and reduce costs when they are fed consistent, clean data.

Key Takeaways for Data Leaders

- Invest in Data Quality: Prioritize initiatives that align with AI goals and business outcomes.

- Leverage AI for Data Management: Use AI tools to automate cleansing, validation, and monitoring tasks.

- Establish Governance Frameworks: Ensure accountability, policies, and continuous monitoring to maintain data integrity.

- Promote a Data-Driven Culture: Foster collaboration and awareness across teams about the strategic importance of data quality.

At Acumen Velocity, our data quality practitioners have helped some of the largest organizations implement robust data quality initiatives. We are tool agnostic and process intensive, and we pride ourselves on fitting the right technology to the right business needs and aligning it with organizational goals.

Contact us for a free, no-obligation initial assessment of your organization's data quality. We can help your team craft the right quality initiatives so that your data is ready for the AI challenges you are tasked with.